Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

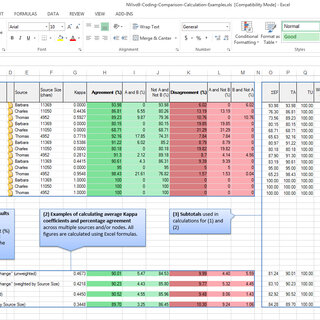

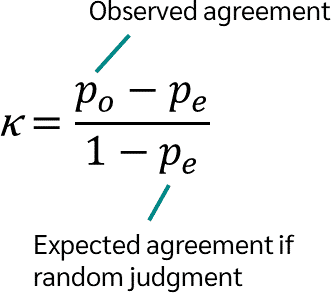

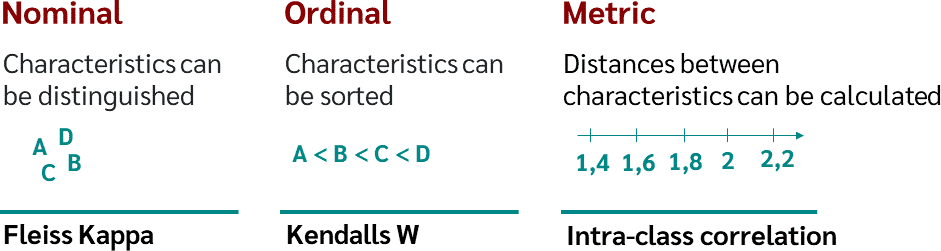

Fleiss' multirater kappa (1971), which is a chance-adjusted index of agreement for multirater categorization of nominal variab

(PDF) Measuring agreement among several raters classifying subjects into one-or-more (hierarchical) nominal categories. A generalisation of Fleiss' kappa

![Analysis and construction of noun hypernym hierarchies to enhance Roget's Thesaurus | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/ba1334ecb74a635373e8a7da2c2d5bbfaed8618b/82-Table6.4-1.png)

[PDF] Analysis and construction of noun hypernym hierarchies to enhance Roget's Thesaurus | Semantic Scholar

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

Comparison of Cohen's Kappa and Gwet's AC1 with a mass shooting classification index: A study of rater uncertainty | Semantic Scholar

![Sample-size calculations for Cohen's kappa. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/c59f58e51e97eaa055b450d9f71cac402d7e45ad/3-Table1-1.png)